The rise of deepfakes, particularly those exploiting individuals for non-consensual pornography and spreading malicious misinformation, has become a pressing concern for policymakers and the public alike.

Recognizing the urgent need for action, the Biden-Harris administration has secured voluntary commitments from leading artificial intelligence companies to implement safeguards against the creation and dissemination of harmful deepfakes.

This initiative marks a significant step towards mitigating the potential societal damage posed by unchecked AI-generated content.

White House Agreement

The White House's effort focuses on obtaining voluntary pledges from key players in the AI industry, including OpenAI, Anthropic, and Microsoft.

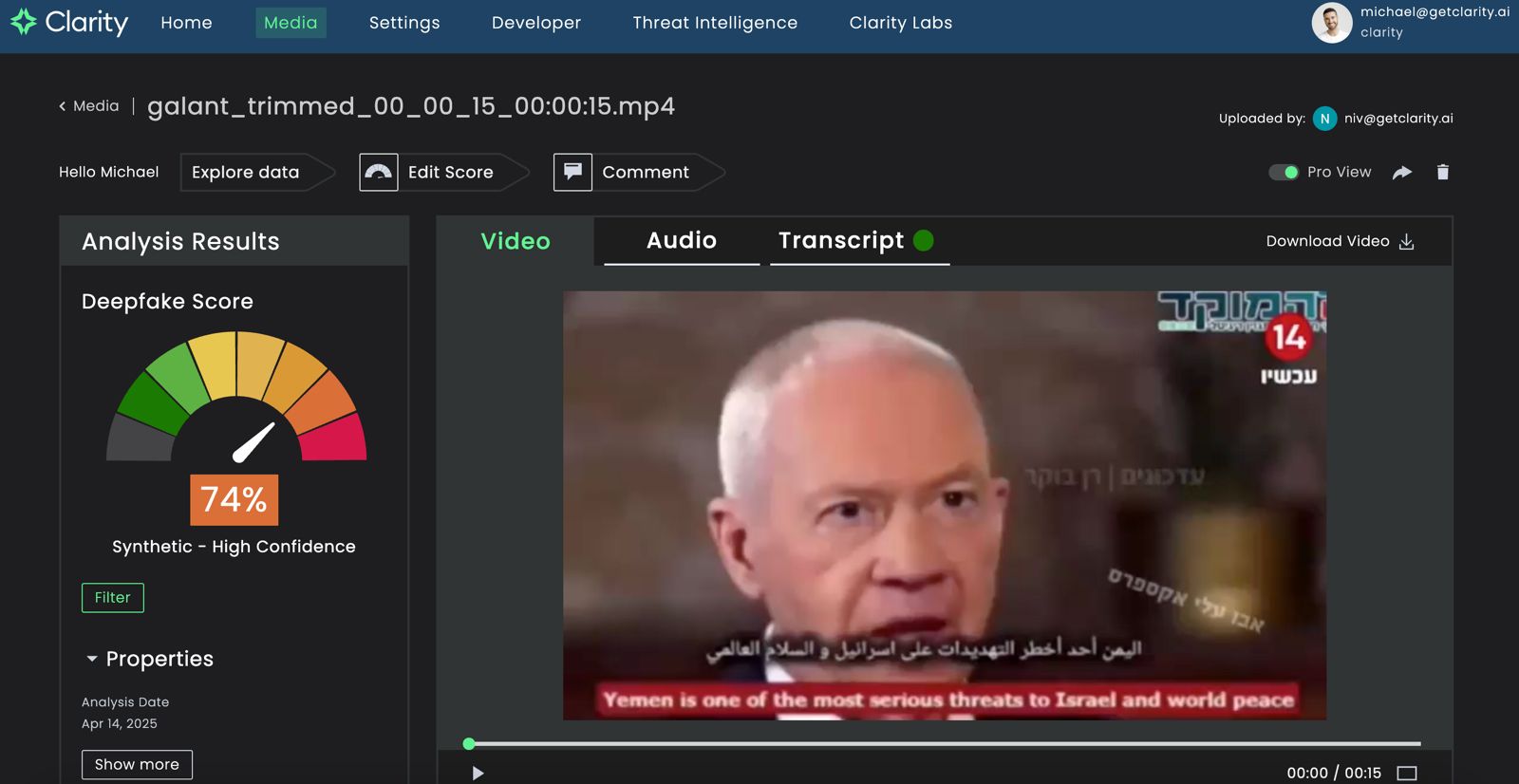

These commitments aim to address two aspects of deepfake misuse: the creation of non-consensual intimate imagery, often referred to as deepfake nudes, and the spread of AI-generated misinformation.

The administration, led by figures like Arati Prabhakar, Director of the White House Office of Science and Technology Policy, has emphasized the urgency of these measures, highlighting the potential for deepfakes to erode trust in media, manipulate public opinion, and inflict emotional harm on victims.

The Core Commitments: Technical and Policy Safeguards

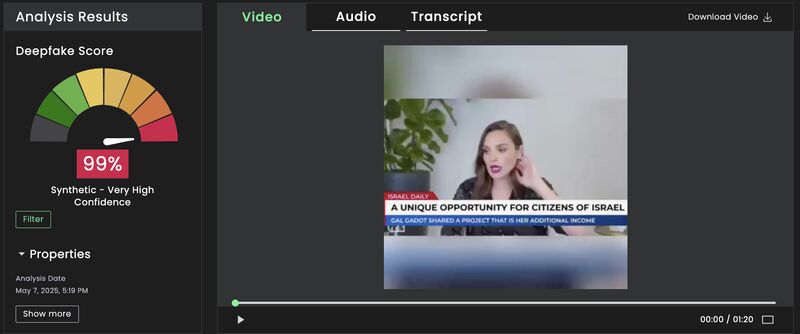

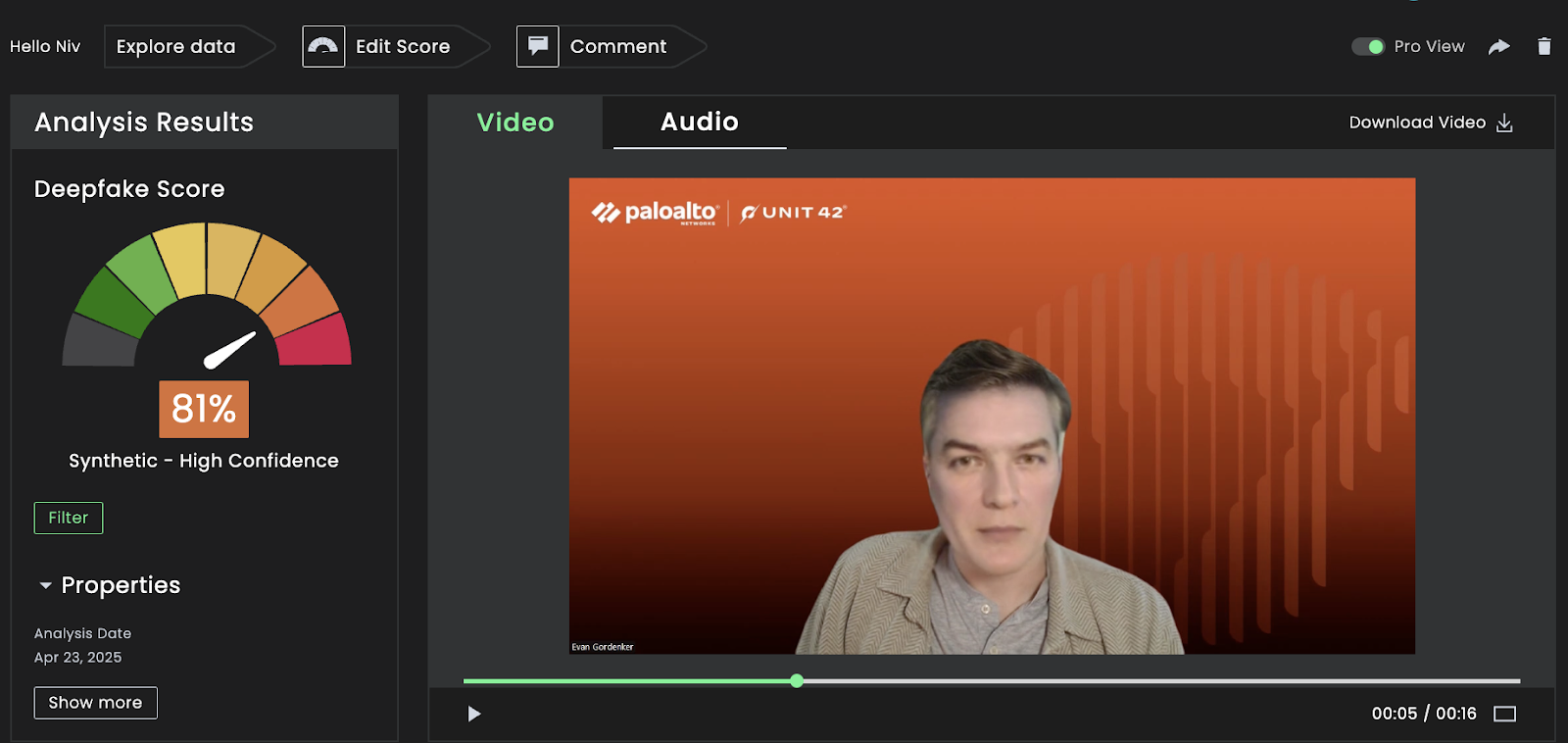

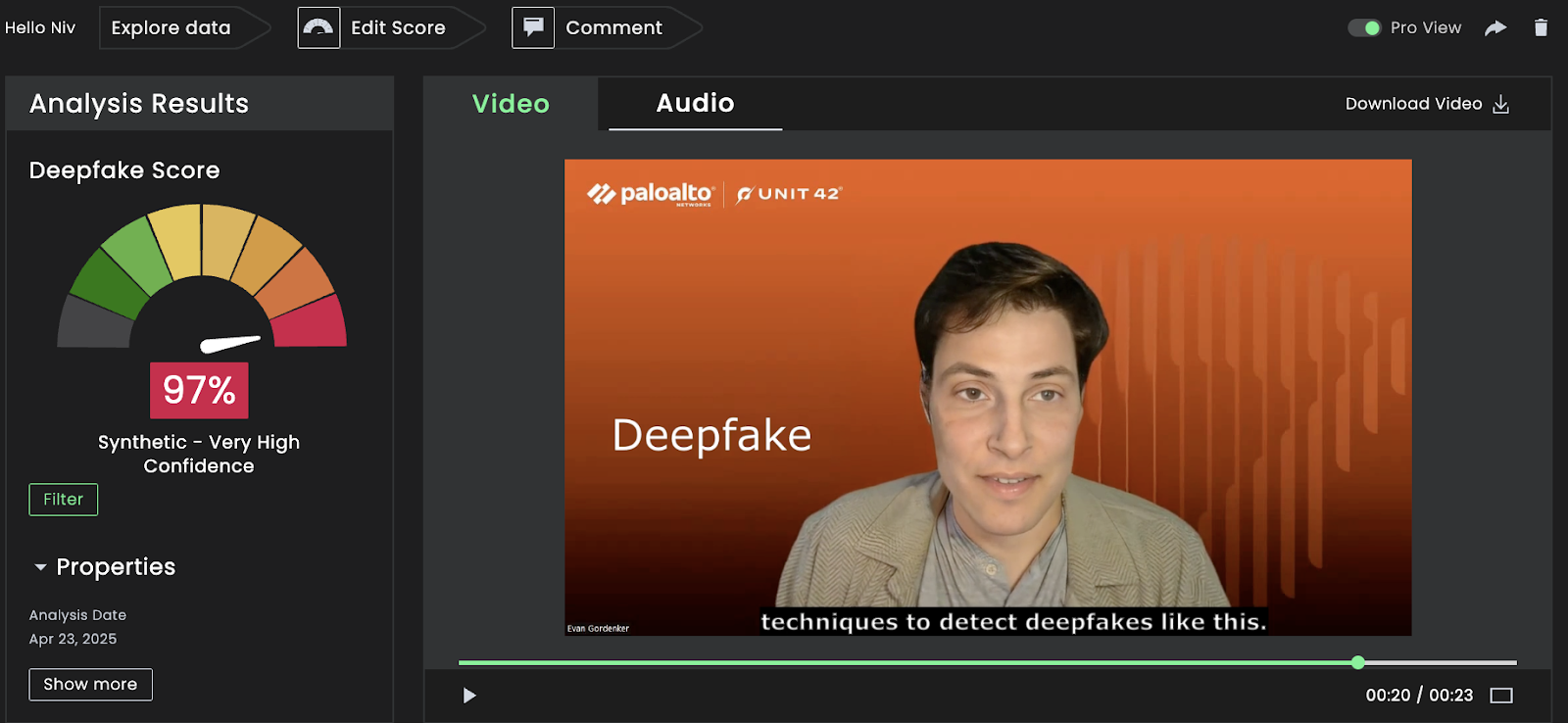

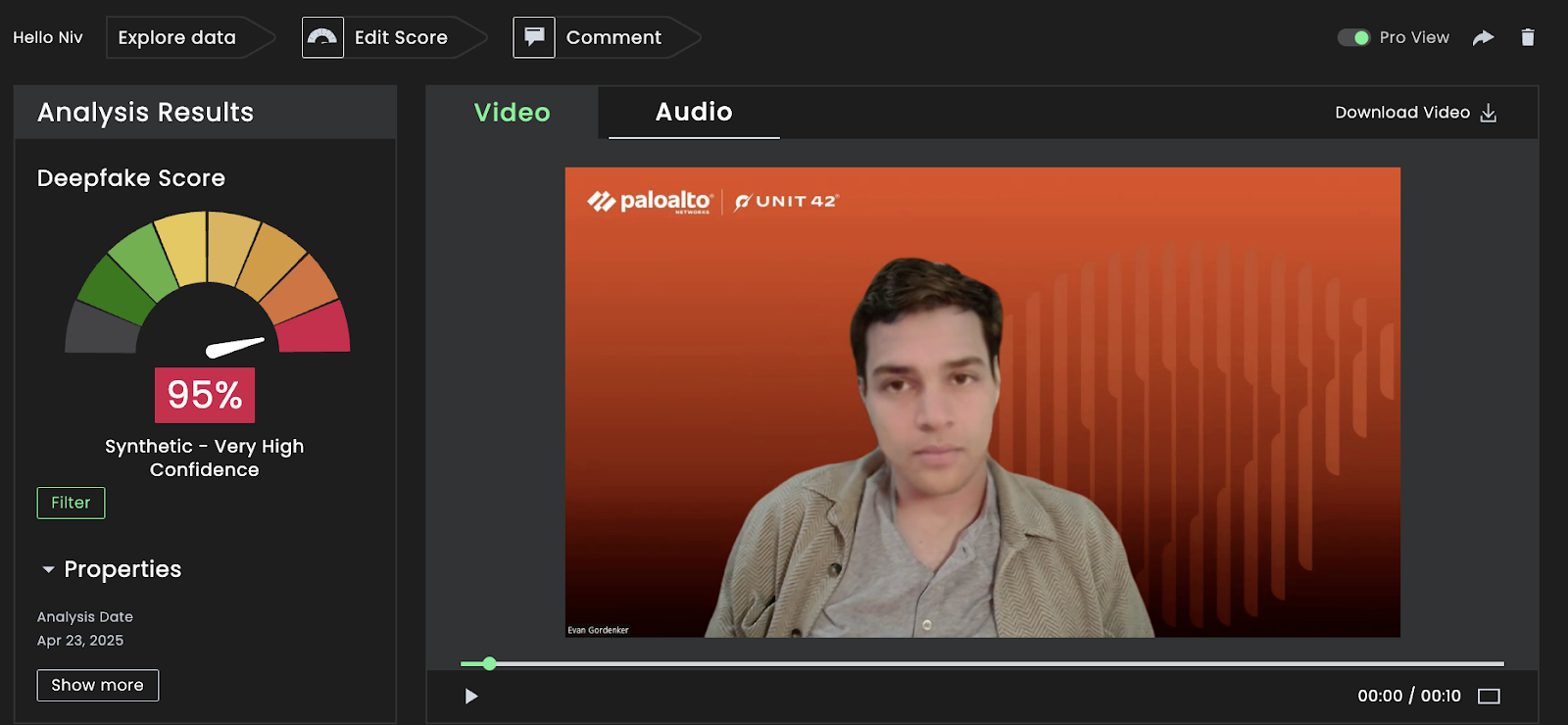

The voluntary commitments include a range of technical and policy-based safeguards. On the technical side, companies have pledged to develop and implement systems for watermarking or labeling AI-generated content, making it easier to identify manipulated media. They are also working to improve detection algorithms so that deepfakes can be identified more accurately. Crucially, these firms are taking measures to prevent the generation of deepfake sexual content by implementing filters and restrictions within their AI models.

Beyond technical measures, the commitments extend to policy and enforcement. Companies have agreed to strengthen their terms of service to explicitly prohibit the creation and distribution of deepfakes for abusive purposes.

They are also exploring avenues for collaboration with law enforcement and other agencies to address deepfake-related crimes. Furthermore, transparency and accountability are key components of the initiative.

Societal Impact and the Future of AI Ethics

The implications of these voluntary pledges extend beyond the immediate goal of curbing deepfake abuse. They could shape the future of AI development by helping to balance innovation against ethical considerations.

By proactively addressing the risks associated with AI-generated content, these companies can foster greater public trust in the technology. However, the effectiveness of voluntary commitments remains controversial.

While they demonstrate a willingness on the part of industry leaders to address the issue, some argue that stronger regulatory measures may be necessary to ensure consistent and enforceable standards.

“Seeing big tech voluntarily agree on AI safety is encouraging – especially the pledge to watermark AI content. It’s a sign that both industry and government recognize the deepfake threat and are beginning to grow in the same direction to address it.”