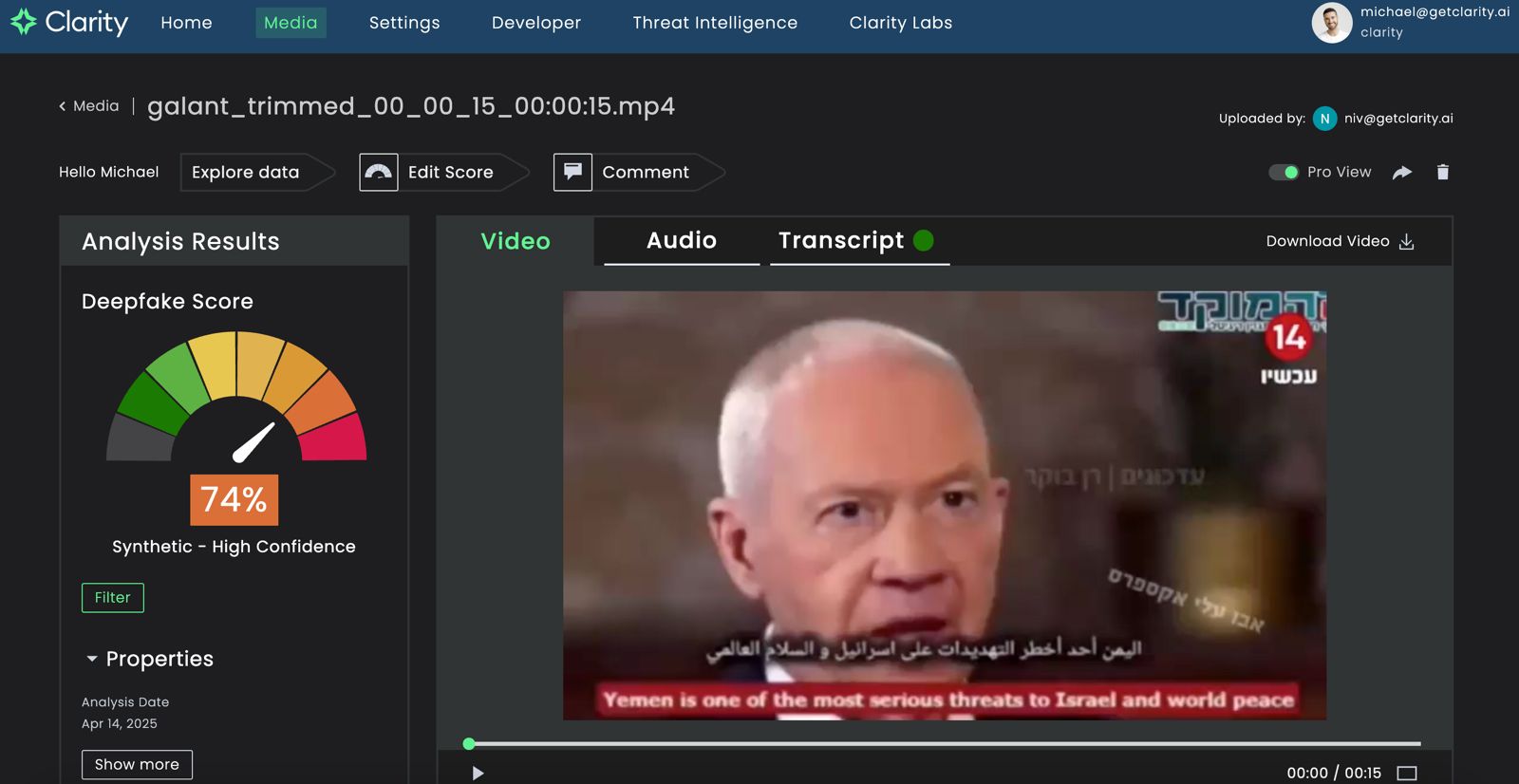

What is the Cloud Security AI Workbench?

The Cloud Security AI Workbench is an extensible cybersecurity platform aimed at improving threat detection, analysis, and response.

Built on Google Cloud’s Vertex AI infrastructure, the workbench offers enterprise-grade features like data isolation, protection, sovereignty, and compliance support. It integrates seamlessly with Google's existing security tools, including Mandiant Threat Intelligence and VirusTotal, both of which were acquired by Google in recent years.

Sec-PaLM underpins the workbench by incorporating extensive security intelligence, such as data on vulnerabilities, malware behavior, threat indicators, and profiles of threat actors. This allows the platform to provide actionable insights and human-readable summaries of complex security issues.

A Focus on Code

One of the standout features of the workbench is VirusTotal Code Insight. Using Sec-PaLM, this tool analyzes potentially malicious scripts and provides natural language summaries of code behavior to detect threats more effectively.

Another key offering is Mandiant Breach Analytics for Chronicle, which alerts organizations to active breaches in real-time and offers contextualized responses to findings using Sec-PaLM.

The Security Command Center AI delivers near-instant analysis of attack paths and impacted assets while providing recommendations for mitigation and compliance. Additionally, Assured OSS enhances open-source software vulnerability management by helping organizations proactively address risks in their software supply chains.

Addressing Industry Challenges

Google’s Cloud Security AI Workbench is designed to tackle several pressing issues in cybersecurity.

With an increasing number of sophisticated attacks, security teams often face overwhelming amounts of data. Sec-PaLM helps prioritize actionable threats by summarizing and contextualizing information.

Cybersecurity also faces a significant skills gap. Generative AI tools like Sec-PaLM aim to augment human expertise by automating routine tasks and providing insights that would otherwise require extensive manual analysis.

Many organizations struggle with fragmented security solutions, but the AI Workbench integrates multiple functionalities into a unified platform, simplifying workflows for security professionals.

How Does Sec-PaLM Compare?

Google’s announcement comes shortly after Microsoft introduced its own generative AI-powered cybersecurity tool, Security Copilot, based on OpenAI’s GPT-4.

Both platforms aim to enhance threat intelligence and response capabilities through conversational interfaces and natural language processing. However, Google emphasizes that Sec-PaLM is deeply rooted in years of foundational research by Google and DeepMind, offering a bespoke solution tailored specifically for cybersecurity use cases.

While these developments signal a promising future for AI in cybersecurity, experts caution against over-reliance on such tools.

Generative AI models are not immune to errors or vulnerabilities like prompt injection attacks, which could lead to unintended behaviors. Additionally, the effectiveness of these tools depends heavily on proper implementation and expert oversight.

Looking Ahead

As Google plans to roll out the Cloud Security AI Workbench gradually through trusted testers before broader availability, organizations have an opportunity to evaluate how these capabilities might integrate with their existing security frameworks. Early feedback from adopters like Accenture suggests potential for meaningful operational improvements, particularly in threat detection and analysis workflows.

The emergence of specialized security LLMs like Sec-PaLM represents an important evolution in enterprise cybersecurity tooling. However, successful implementation will require more than technological adoption. Organizations will need to develop appropriate governance models, establish clear processes for human oversight, and ensure security teams receive proper training to effectively leverage these new capabilities.

The race between Google's Sec-PaLM and Microsoft's Security Copilot also highlights how competitive the AI-powered security market is becoming, potentially accelerating innovation while giving enterprises more options to enhance their security postures through specialized AI assistants.